New extensively drug-resistant variants of an ancient and deadly disease, typhoid fever, are spreading across international borders. Cases have been reported in Pakistan, India, Bangladesh, the Philippines, Iraq, Guatemala, the UK, the US and Germany, and more recently in Australia and Canada. In recent years, drug-resistant and travel-associated typhoid variants have also been spreading across the African continent. Under-reporting and gaps in international surveillance mean that drug-resistant typhoid is probably even more widespread than we think.

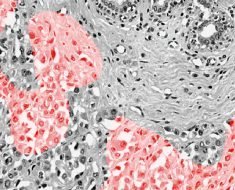

Typhoid is a bacterial disease that causes fever, headache, abdominal pain, and constipation or diarrhoea. Salmonella enterica serovar Typhi, the organism behind typhoid, kills up to one in five patients if the infection is left untreated. S. Typhi spreads from person to person through water and food contaminated by faeces. As a consequence, typhoid is often associated with inadequate sanitation and water systems and with poor hygiene practices.

The rapid rise of increasingly difficult-to-treat typhoid is a very worrying prospect. In an age of unparalleled international trade and travel, any regional rise in antibiotic resistance will inevitably have global knock-on effects.

In Europe, Australia and North America, the isolated cases caused by extensively drug-resistant (XDR) strains were travel-related. Travellers had become infected while visiting Pakistan, where a large-scale outbreak of XDR typhoid is ongoing. The Pakistani XDR strain has caused at least 5,274 cases in Sindh Province since 2016 and is proving resistant to all commonly available antibiotics except one: azithromycin.

The coming years will likely see further travel-related resistant cases throughout the world. In Britain, strong demographic and historical ties to South Asia mean that about 500 typhoid cases, mostly travel-associated, are reported every year. In the US, at least 309 cases occurred in 2015, and almost 80% of confirmed cases reported a history of travel to endemic areas. In Germany, 56 cases were reported in 2018, 96% of which were travel-associated.

The return of typhoid has come as something of a shock to health systems in richer countries. Between the late 19th century and the 1950s, sanitary improvements, effective vaccines and antibiotics eliminated endemic typhoid from most high-income countries. But after a lifetime of relative security, the prospect of typhoid once again causing deaths in hospitals in high-income countries is no longer an outlandish idea.

So how did this happen? The answer is uncomfortable and bound up with the inward-looking nature of Western disease eradication campaigns over the last century. Contrary to popular conceptions of typhoid as a disease of the past, typhoid never really left. As our new research shows, because typhoid control often stopped at the borders of high-income countries, it became a neglected disease in other, poorer countries. This global neglect is now proving costly.

Controlling typhoid depends in part on new technologies to prevent, diagnose and treat the disease. But it is also crucial to keep a clear eye on the past, so that we can rewrite the policies that enabled typhoid’s resurgence and avoid repeating old mistakes.

Killer of paupers and kings

Genomic analysis and archaeological evidence make it clear that typhoid has been circulating in human populations for millennia.

Although written sources alone do not allow accurate retrospective diagnoses, typhoid has been named as the mysterious killer of princes, presidents and paupers around the world. Typhoid was also a notorious scourge of armies at war. During the Second Boer War (1899-1902), the British Army reported more than 8,000 typhoid deaths.

Despite its prominence, typhoid’s cause and mode of transmission remained a mystery. Many experts initially believed that typhoid was caused by “bad air” originating from decaying matter and pungent-smelling filth. There was also no clear way to distinguish typhoid from other fevers of the time. Modern notions of typhoid as a disease with a distinct clinical picture, a mostly water- and food-borne mode of transmission and a bacterial cause emerged only gradually during the 19th century, after repeated cholera pandemics kick-started investigations into waterborne modes of transmission.

The emerging concept of typhoid as a distinct bacterial disease that could be carried by contaminated water and food was accompanied by a parallel revolution in sanitary infrastructure in Europe, North America and parts of Asia, Africa, South America and Oceania. New ideas about waterborne typhoid transmission subsequently played an important role in justifying ongoing expenditure on improved sewage and drinking water systems.

Alice in Typhoidland

For example, in the British university city of Oxford, sanitarians like Henry Liddell, Dean of Christ Church and father of Alice Liddell (the girl who inspired Lewis Carroll’s famous children’s book Alice in Wonderland), used the spectre of typhoid to lobby for radical interventions in the city’s infrastructure and hydrology.

He did so alongside his close friend Henry Acland, a professor of medicine, physician to the royal family and the alleged inspiration for the White Rabbit.

Liddell, whose wife had nearly died of typhoid in London, also oversaw improvements to his college’s grounds and sanitary infrastructure. These included redirecting the Trill Mill Stream, an open sewer, underground in 1863, the same year that Carroll began to write his first iconic book.

Although initial sanitary progress in Oxford was slow, growing public criticism, new government credits and scandals such as the deaths of three undergraduates from typhoid encouraged city and university authorities to take decisive action during the 1870s. Within a little over a decade, they constructed a new sewage system, closed down leaking cesspools, stopped pumping drinking water from below the main sewer outlet and created an affordable, rate-financed, municipally owned supply of filtered drinking water.

The case of Oxford is far from unique. By the turn of the century, high-income cities across the world were investing substantial amounts of money in their water and sanitary infrastructures. While early interventions were often hit-and-miss and could vary significantly between cities, there is a clear correlation between rising expenditure on the provision of safe water services and declining mortality from waterborne diseases like typhoid.

From prevention to eradication

New technologies further aided attempts to curb what was increasingly described as a preventable disease. In 1897, Maidstone became the first British town to have its entire water supply treated with chlorine.

Vaccination emerged as another way to protect populations in areas without sanitary infrastructure. Devised by German and British researchers in 1896, early typhoid vaccines consisted of killed typhoid strains and were among the first bacterial vaccines. During the Second Boer War, British troops leaving the fold of “civilisation” could opt for inoculation against typhoid. This first roll-out of heat-killed vaccines was marred by quality problems and adverse side effects that made early vaccination extremely unpleasant. But by World War I, all major powers used improved bacterial typhoid vaccines to effectively protect troops and travellers.

Typhoid’s emerging status as a preventable disease was celebrated as a great success story of “rational Western science”. It also led to calls to move from prevention to eradication. Leading the hunt was the new profession of bacteriology.

Bacteriological research soon showed that typhoid was far more complex than initially thought. Although its transmission via contaminated water and food was becoming increasingly clear, it emerged that the bacterium could also be excreted by seemingly healthy people. These so-called asymptomatic, or healthy, carriers show no symptoms but can still excrete S. Typhi in their faeces for years after the initial infection.

This concept of healthy carriers, advanced by the German bacteriologist Robert Koch in 1902, significantly complicated hopes for typhoid eradication. How was one supposed to deal with seemingly healthy members of the community, whose typhoid-contaminated faeces could put others at risk?

Answers reflected prevailing socio-cultural values. While most typhoid carriers were allowed to remain in their communities if they agreed to follow precautionary hygiene measures (abstaining from working in food preparation and waterworks), some were forcibly detained and isolated. Decisions about who could be trusted and who had to be isolated were far from neutral and reflected contemporary concerns about immigration, racism, chauvinist gender norms and rising militarism.

In Germany, for example, bacteriologists tried to “cleanse” military deployment zones earmarked for an attack on France by testing communities, creating lists of carriers and, from around 1904 onward, placing some in mandatory isolation. While communities in the centre of the Reich mostly escaped this practice, Prussian experts had few qualms about imposing mandatory isolation in the Franco-German borderlands on the grounds of military necessity. During World War I, German soldiers were routinely screened for typhoid, and strict controls were set up to stop potential carriers, such as soldiers or displaced civilians, from infecting civilian populations in Germany. Once again, not everybody was treated equally: certain groups, such as Eastern European Jews, were disproportionately accused of carrying diseases of “filth” like typhoid.

In the United States, Irish immigrant Mary Mallon, who became known as “Typhoid Mary”, was the most prominent typhoid carrier to be detained after infecting the families she cooked for. Mallon was quarantined from 1907 to 1910. After breaching the terms of her release by working as a cook under an assumed name, she was quarantined again from 1915 until her death in 1938.

British authorities, meanwhile, detained carriers deemed mentally incapable of upholding sanitary standards, most of them women, at the Long Grove Asylum in Epsom between 1907 and 1992. Doubts about the women’s alleged insanity later emerged.

But with typhoid continuing to decline in high-income countries, such treatment of carriers rarely made headlines. After World War II, there was instead growing optimism about the prospect of eventual typhoid elimination. In Europe and North America, functioning sanitation systems, chlorination, fine-grained national surveillance of typhoid outbreaks and carriers by public health authorities, vaccines, and the advent of effective therapies for both typhoid patients (chloramphenicol, marketed as Chloromycetin, 1948) and carriers (ampicillin, 1961) turned the once-feared disease into a negligible health threat.

Although individual outbreaks on ocean liners, in resorts and occasionally in towns continued to attract public interest, typhoid was increasingly portrayed as a disease of the past, one that had been defeated by heroic sanitary and medical interventions. In an age of widespread confidence in the imminent scientific defeat of infectious disease, there seemed little reason to fear its return.

An infectious divide

This confidence was misplaced. While typhoid had almost vanished from high-income countries, it remained endemic in other parts of the world.

Over the next half century, the resulting infectious divide was reinforced by a relative neglect of international campaigns to tackle typhoid. Sustained large-scale investment in the supply of safe drinking water, safe sewage disposal, and basic healthcare services would have gone a long way to curb not only typhoid but many other diseases in the Global South.

But actual investment often remained ad hoc, uncoordinated and insufficient. Instead, many rich countries focused on protecting their own populations. They prioritised vaccines and antibiotics and put in place surveillance-based biosecurity regimes designed to stop typhoid from crossing back into high-income countries via travellers and migrants. This strategy was cheap in the short term but very costly in the long term.

During the Cold War, governments and non-governmental organisations on both sides of the Iron Curtain provided infrastructural and medical aid to allies in the so-called “developing world”. Yet typhoid did not feature high on the international agenda and was frequently overshadowed by other, more prominent or fast-burning diseases such as malaria and smallpox. Meanwhile, a mix of population growth, resource constraints and inadequate access to water, sanitation and health infrastructure created perfect breeding grounds for typhoid in the Global South. These conditions also encouraged an over-reliance on comparatively cheap antibiotics to keep the disease in check. The result was an evolutionary surge of increasingly antibiotic-resistant typhoid strains.

This surge had been predicted. Resistance to chloramphenicol, the first antibiotic treatment for typhoid, had been reported within two years of the drug’s first use against typhoid in 1948. Individual strains had also proven resistant to ampicillin within years of its 1961 launch.

Typhoid outbreaks increase

In 1967, researchers in Israel and Greece reported isolating typhoid strains with transferable chloramphenicol resistance. In the same year, British experts analysing typhoid strains from Kuwait detected transferable resistance not only to chloramphenicol but also to ampicillin and the tetracyclines. Five years later, an explosive outbreak that infected more than 10,000 people in Mexico City was caused by a strain resistant to several antibiotics, including chloramphenicol but fortunately not ampicillin. India and Vietnam reported parallel outbreaks.

Western responses to the outbreaks were ad hoc, again focusing on biosecurity measures like traveller surveillance and vaccination rather than on concerted international campaigns to combat the factors driving the surge of resistant typhoid in low-income areas.

In response to the outbreaks in India and Mexico, Western media commentators accused local populations of relying on antibiotics too much and using drugs inappropriately. Rarely addressed were the underlying factors, such as insufficient access to affordable healthcare, clean drinking water and effective sewerage systems—or the fact that many of the drugs in use had been exported by Western producers.

The prioritisation of national biosecurity over collective responsibility was echoed in government policies. Western countries and non-governmental organisations provided limited laboratory, sanitation, and medical aid in response to natural disasters and acute outbreaks. But international support remained inadequate to compensate for existing financial, infrastructural, and organisational constraints or to keep up with population growth and rapid urbanisation in endemic areas.

Meanwhile, concerns about the import of resistant “foreign strains” encouraged governments to devote significant resources to monitoring borders, travellers and migratory populations for typhoid. The resulting monitoring efforts remained influenced by culturally ingrained stereotypes of typhoid as a disease of uncivilised people. In response to the Mexican outbreak, US public health officials not only focused on monitoring non-American strains and intensified community surveillance of people with “Hispanic surnames”, but also highlighted alleged risk factors such as “Hispanic hygiene habits”, even though no empirical research was conducted to test whether these culturally biased associations were true.

Continued neglect

The neglect of international efforts to combat typhoid on a global level carried over into the 1980s. This neglect was facilitated by international disease surveillance networks with large coverage gaps in areas outside the Global North. It was also the result of overconfidence in newly available treatments.

Marked by political and economic instability, the following two decades saw a rollback of healthcare provision. This happened in large parts of the Soviet sphere and also in Western-affiliated “developing countries” undergoing World Bank-monitored programmes to implement free-market policies. Without access to effective and affordable healthcare and sanitary services, local populations frequently turned to cheap antibiotics to control disease.

The result was a further global surge of antimicrobial resistance at precisely the time when a growing number of international drug companies were withdrawing investment from new antibiotic development because of a lack of profitability. In 1988, a typhoid outbreak in Kashmir proved resistant to all three first-line antibiotics: chloramphenicol, ampicillin and co-trimoxazole. Similar outbreaks were soon reported from Shanghai, Pakistan and the Mekong Delta. New genetic sequencing revealed that much of the rising antibiotic resistance was associated with the spread of a specific haplotype (a distinctive combination of genetic variants inherited together as a unit).

Organisms with this haplotype, designated “H58”, were undergoing a significant population expansion and were increasingly resistant not only to older first-line drugs but also to newer reserve antibiotics such as the fluoroquinolones and cephalosporins. By the late 1990s, the majority of strains isolated during a large-scale outbreak involving thousands of patients in formerly Soviet Tajikistan proved resistant to the fluoroquinolones. Sporadic cephalosporin resistance was reported from the early 2000s onwards.

The current Pakistani outbreak of XDR typhoid, which began in 2016, is caused by a variant of H58 that is resistant to all antibiotics commonly used against typhoid except azithromycin. Complete resistance to every locally available drug may be only one mutation away.

A new generation of vaccines

This uneven history shows the limitations of making policy at a national or regional level when it comes to curbing threats that cross borders. Whether we choose to justify action on ethical grounds of collective responsibility or out of enlightened self-interest, the global threat posed by XDR typhoid, and by the conditions that produce multidrug-resistant pathogens like it, will only be overcome by more, not less, international involvement.

Fortunately, a new generation of vaccines could now provide a cornerstone for renewed international efforts at typhoid control. The new typhoid Vi conjugate vaccines (TCVs) overcome many of the limitations of earlier vaccines. One of them, Typbar-TCV, requires only a single dose, is approved for children aged six months and older (previous vaccines were not suitable for children under two) and was recently licensed in India, Nepal, Cambodia and Nigeria. Other advanced TCV candidates are in manufacture and development.

These vaccines are no longer primarily designed to protect foreign travellers and limit acute outbreaks. Nor are they being developed exclusively in the parts of the world that need them least: Typbar-TCV was developed and manufactured by the Indian company Bharat Biotech.

And in another twist of history that takes us back to Alice in Wonderland’s Oxford, crucial efficacy data for Typbar-TCV came not from Indian but from British volunteers. In 2017, around 100 closely observed participants drank live typhoid bacteria to test the vaccine’s safety and efficacy. This carefully controlled Oxford “outbreak” is the largest recently recorded typhoid outbreak in the UK and provided critical data for the WHO’s decision to recommend the vaccine in 2018. It reverses the usual pattern of vaccines being developed in high-income countries but tested in low- and middle-income countries.

The long-term implications of this geographic shift in vaccine development are significant. As Samir Saha of the Child Health Research Foundation in Dhaka, Bangladesh, puts it:

We Bangladeshis, like any other low middle-income countries, usually receive a vaccine after 20-25 years of its introduction in the developed world—pneumococcal vaccines took 20 years and Hib vaccine took 25 years to travel here. This is the first time that a vaccine will be first introduced in a country where it is needed the most.

A bio-social problem

The arrival of the new vaccines is fantastic news during a time of failing antibiotics. But their roll-out will have to be accompanied by other measures if we are to move towards sustainable control of diseases that cause intestinal illnesses in low-income countries. As the long history of typhoid makes clear, effective health strategies have to integrate all available aspects of typhoid control.

Since around 1900, vaccines have played an important role in protecting travelling populations and military personnel from typhoid. But wider control has always also depended on the provision of robust drinking water and waste-water systems to prevent typhoid from spreading, an effective surveillance network to monitor typhoid incidence and the targeted provision of effective high-quality drugs to treat the disease. Over-reliance on any one intervention has repeatedly undermined wider control efforts.

At the same time, control efforts have to take place at multiple levels. Not only is there ample proof that ambitions for typhoid control cannot be limited to high-income countries alone, there is also strong evidence of the importance of collaboration between local institutions. While 20th-century aid efforts primarily targeted nation states, a close look at the early “heroic age” of typhoid control reveals the importance of coalitions of municipal and local actors in developing effective, locally tailored sanitary solutions. The provision of cheap credit to support initiatives with local buy-in was equally important.
